Building Create Your Reality Agent (CYRA) - Spatial Computing App/Agent/Benchmark for AgentBeats Hackathon organized by UC Berkeley's Agentic AI Course

January 31, 2026

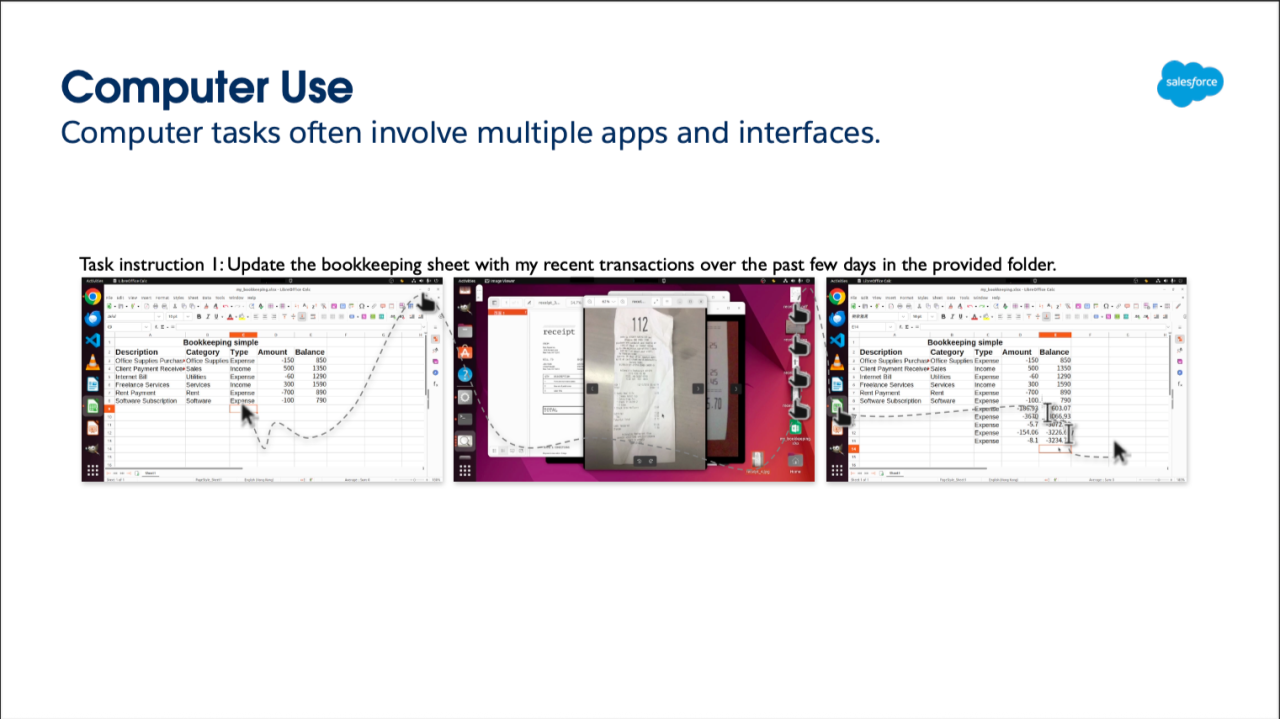

Screenshot of the next version of TimeCake (tasks as they would appear on a Kanban board, distinct from the RealityTasks app)

Building on the UC Berkeley F25 Agentic AI MOOC with Professor Dawn Song, I just wrapped up my Green Agent submission for the AgentBeats hackathon! The journey from course concepts to production code has been intense, educational, and deeply rewarding.

What I Built: CYRA (Create Your Reality Agent)

CYRA is a visionOS-native agent evaluation framework designed to extend agent benchmarking into spatial computing environments, something existing benchmarks like OSWorld, WebArena, and τ-bench don't address. While traditional benchmarks focus on browser-based or API-centric tasks, CYRA evaluates how agents perceive, reason, and act within immersive, multimodal 3D interfaces native to spatial computing (AR/VR).

The technical architecture combines:

Swift-based spatial UI for Apple Vision Pro (visionOS)

FastAPI backend orchestrating task dispatch and deterministic evaluation

MCP (Model Context Protocol) integration for reproducible, deterministic agent communication (I'm still building this as I publish this updated LinkedIn article, FYI)

A2A (Agent-to-Agent) protocol compliance for cross-platform interoperability, with the eventual goal of adding AP2 (Agent Payments Protocol) for payments through a finance agent (I've been wrapping my head around AP2 since the end of last year, amid a lot of announcements from Google, Coinbase, and Edge & Node that are really exciting for decentralizing fintech)

Lambda.ai cloud storage for full-trace telemetry and reproducible benchmarking
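With a Swift client and a FastAPI backend both handling the same tasks, it helps to define one shared task record and a single canonical serialization. That way both sides produce byte-identical payloads for the same task. The framework-agnostic sketch below uses only the Python standard library. The `Task` fields and the `canonical_json` / `from_json` helpers are hypothetical stand-ins for illustration, not CYRA's actual TaskSchema.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class Task:
    # Hypothetical fields for illustration; the real TaskSchema may differ.
    task_id: str
    title: str
    status: str = "todo"   # e.g. "todo", "doing", "done"
    tags: tuple = ()


def canonical_json(task: Task) -> str:
    """Serialize with sorted keys and no extra whitespace, so the same task
    always yields byte-identical JSON no matter which side serializes it."""
    return json.dumps(asdict(task), sort_keys=True, separators=(",", ":"))


def from_json(raw: str) -> Task:
    """Parse canonical JSON back into a Task (JSON arrays become tuples)."""
    data = json.loads(raw)
    data["tags"] = tuple(data.get("tags", []))
    return Task(**data)


task = Task("t-1", "Log workout", tags=("health",))
raw = canonical_json(task)
# raw == '{"status":"todo","tags":["health"],"task_id":"t-1","title":"Log workout"}'
assert from_json(raw) == task
```

Fixing the key order and separators matters more than the choice of fields. Once serialization is canonical, any two payloads for the same task can be compared or hashed directly.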

Course Concepts → Production Reality

The lectures from Sida Wang (Meta AI Research) on establishing rigorous agentic benchmarks shaped my approach. Wang's emphasis on deterministic scoring and reproducible evaluations made me think critically about what the backbone of CYRA's Green Agent referee system should look like: every state transition, function call, and spatial interaction is logged for post-hoc analysis and replay.
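"Logged for post-hoc analysis and replay" can be made concrete with an append-only event log plus a replay function that rebuilds state from it. This is a minimal sketch under assumed names: `TraceEvent`, `TraceLog`, `replay`, and the event kinds (`state_set`, `function_call`) are hypothetical and not taken from CYRA's code.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class TraceEvent:
    seq: int        # position in the log, assigned on append
    actor: str      # e.g. "user", "green_agent", "purple_agent" (hypothetical)
    kind: str       # e.g. "state_set", "function_call" (hypothetical)
    payload: dict


class TraceLog:
    """Append-only log: events are added, never edited or removed."""

    def __init__(self) -> None:
        self._events: list[TraceEvent] = []

    def record(self, actor: str, kind: str, payload: dict) -> TraceEvent:
        event = TraceEvent(len(self._events), actor, kind, dict(payload))
        self._events.append(event)
        return event

    def events(self) -> tuple:
        return tuple(self._events)


def replay(events) -> dict[str, Any]:
    """Rebuild environment state by folding over logged state transitions in
    sequence order. Other event kinds stay in the log for analysis but do not
    change state during replay."""
    state: dict[str, Any] = {}
    for event in sorted(events, key=lambda e: e.seq):
        if event.kind == "state_set":
            state[event.payload["key"]] = event.payload["value"]
    return state


log = TraceLog()
log.record("user", "state_set", {"key": "task:t-1", "value": "todo"})
log.record("purple_agent", "function_call", {"name": "move_task", "args": {"id": "t-1"}})
log.record("purple_agent", "state_set", {"key": "task:t-1", "value": "done"})
print(replay(log.events()))   # {'task:t-1': 'done'}
```

Because replay is a pure fold over the log, two referees that read the same log always reconstruct the same final state.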

MCP is critical for achieving deterministic communication patterns. For now, I started with loose API integrations to prove that data capturing any user or agent interaction would be traceable. MCP helps make agent interactions verifiable, traceable, and reproducible, which is essential for building evaluation frameworks that can stand up to rigorous scrutiny.
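One way to make a trace verifiable is to hash-chain it. Each record stores a SHA-256 over the previous hash plus its own canonical JSON, so a later edit to any record invalidates the chain from that point on. MCP itself does not do this. The sketch below is a separate, general technique assumed here for illustration, and `chain` / `verify` are hypothetical helper names.

```python
import hashlib
import json

GENESIS = "0" * 64   # placeholder "previous hash" for the first record


def _digest(prev_hash: str, record: dict) -> str:
    body = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def chain(records: list[dict]) -> list[dict]:
    """Wrap each record with the previous hash and its own chained hash."""
    out, prev = [], GENESIS
    for record in records:
        h = _digest(prev, record)
        out.append({"record": record, "prev": prev, "hash": h})
        prev = h
    return out


def verify(chained: list[dict]) -> bool:
    """Recompute every link. Editing, reordering, or deleting a record in the
    middle breaks the chain; catching truncation at the end would also
    require storing the final hash somewhere else."""
    prev = GENESIS
    for entry in chained:
        if entry["prev"] != prev or _digest(prev, entry["record"]) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


trace = chain([
    {"actor": "user", "action": "create_task", "task_id": "t-1"},
    {"actor": "agent", "action": "complete_task", "task_id": "t-1"},
])
print(verify(trace))   # True
```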

As an AR/VR/MR/XR (spatial computing) practitioner, I found that the course's GameAI and reinforcement learning modules resonated with me the most, and they also influenced my design. Rather than treating spatial computing as just another UI layer, I'm approaching it as an embodied agent environment where spatial task competency becomes a first-class evaluation dimension, similar to how RL agents are evaluated on state understanding and action selection.

Key Wins and Real-World Challenges

The MVP focused on getting the core evaluation loop working with text-based task creation, which took longer than expected to build. It will eventually include much more detail on state matching, action assertions, and deterministic scoring.

I had to scale back from the full multimodal vision of speech-to-text and VisionKit/CoreML integration. There, I was attempting to emulate every way an agent could capture human data: voice, literal data input (typing on a keyboard in Vision Pro, or on a computer or iPhone), and an agent mimicking what a human does when manually capturing a handwritten task on physical paper. That last piece belongs to a whole separate productivity app I am building. To meet the Phase 1 deadline, I decided to branch those features off for Phase 2. After the hackathon, I will keep iterating on them through a TestFlight beta with the users I have been conducting research with over time, to help shape which kinds of productivity apps would help them most.
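State matching, action assertions, and deterministic scoring fit together naturally as one pure function. It takes the expected end state and the required action order, compares them against what the agent actually did, and returns a score that depends only on those inputs. This is a minimal sketch. The `Expectation` format, the ordered-subsequence rule for actions, and the equal weighting of checks are all assumptions, not CYRA's finalized scoring.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Expectation:
    # Hypothetical expectation format for one text-created task.
    final_state: dict        # key -> value the environment should end in
    required_actions: tuple  # action names that must occur, in this order


def is_subsequence(needed, actions) -> bool:
    """True if every item of `needed` appears in `actions` in order
    (other actions may appear in between)."""
    it = iter(actions)
    return all(any(a == n for a in it) for n in needed)


def score(expect: Expectation, final_state: dict, actions: list) -> dict:
    """Deterministic: the same final state and action list always give the
    same result. Each state key is one check; the action order is one more."""
    state_checks = [final_state.get(k) == v for k, v in expect.final_state.items()]
    actions_ok = is_subsequence(expect.required_actions, actions)
    checks = state_checks + [actions_ok]
    return {
        "state_match": all(state_checks),
        "actions_ok": actions_ok,
        "score": sum(checks) / len(checks),
    }


expect = Expectation({"task:t-1": "done", "task:t-2": "todo"}, ("open_board", "move_task"))
result = score(expect, {"task:t-1": "done", "task:t-2": "doing"},
               ["open_board", "select_task", "move_task"])
print(result)   # state_match False, actions_ok True, score 2/3
```

Checking actions as an ordered subsequence rather than an exact sequence is a design choice. It tolerates harmless extra steps while still requiring the essential ones in the right order.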

Some of the technical challenges I didn’t anticipate had less to do with advanced reasoning and more to do with the everyday engineering any software project goes through, where we underestimate how much time we can spend debugging the simplest parts of our code:

Debugging local IP configurations and Xcode simulator quirks taught me about the gap between development and deployment. Xcode's ChatGPT integration is not bad, but I went back and forth between it, Cursor, and reading through my documentation.

Implementing Docker containerization for the AgentBeats registry forced me to think about portability and reproducibility from day one

Task fetching and state synchronization between Swift and FastAPI revealed edge cases in async communication patterns
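Two async edge cases show up constantly between a client and a backend. The first is a retried request (for example, after a client timeout) being applied twice. The second is a slow, stale update overwriting newer state. A common remedy is idempotency keys plus optimistic concurrency with a version counter. The server-side sketch below illustrates both under assumed names. `TaskStore`, `request_id`, and `base_version` are hypothetical, not the app's actual API.

```python
from dataclasses import dataclass


@dataclass
class StoredTask:
    task_id: str
    status: str
    version: int = 0


class TaskStore:
    """In-memory stand-in for the backend's task storage."""

    def __init__(self) -> None:
        self._tasks: dict[str, StoredTask] = {}
        self._applied_requests: set[str] = set()

    def create(self, task_id: str, status: str = "todo") -> StoredTask:
        task = StoredTask(task_id, status)
        self._tasks[task_id] = task
        return task

    def get(self, task_id: str) -> StoredTask:
        return self._tasks[task_id]

    def update(self, request_id: str, task_id: str, status: str, base_version: int) -> str:
        # Idempotency: a retry of an already-applied request is a no-op.
        if request_id in self._applied_requests:
            return "duplicate"
        task = self._tasks[task_id]
        # Optimistic concurrency: the client must have seen the latest version.
        if base_version != task.version:
            return "conflict"   # client should refetch, then retry
        task.status = status
        task.version += 1
        self._applied_requests.add(request_id)
        return "ok"


store = TaskStore()
store.create("t-1")
print(store.update("r1", "t-1", "doing", base_version=0))  # ok
print(store.update("r1", "t-1", "doing", base_version=0))  # duplicate (retry)
print(store.update("r2", "t-1", "done", base_version=0))   # conflict (stale)
```

Returning an explicit `conflict` instead of silently overwriting keeps the Swift client's view and the server's state from drifting apart unnoticed.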

The MCP Learning Curve

One of my biggest takeaways was implementing MCP tool definitions and ensuring A2A-compliant communication. The protocol's strict schema requirements initially felt constraining, but they enforced exactly the kind of structured thinking that makes evaluations reproducible. This aligns perfectly with the course's emphasis on verifiable benchmarks—you can't evaluate what you can't measure, and you can't measure what isn't deterministic.
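For context on those strict schema requirements: an MCP tool is advertised with a `name`, a `description`, and an `inputSchema` that is a JSON Schema object, and calls are expected to match that schema. The sketch below shows a tool entry in that shape along with a deliberately tiny argument checker. The `create_task` tool is hypothetical, and `validate_args` covers only required keys, unknown keys, and primitive types. A real server would use a full JSON Schema validator.

```python
# Tool entry shaped like an MCP tool definition: name, description, inputSchema.
CREATE_TASK_TOOL = {
    "name": "create_task",   # hypothetical tool name
    "description": "Create a task on the user's board.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "title": {"type": "string"},
            "priority": {"type": "integer"},
        },
        "required": ["title"],
        "additionalProperties": False,
    },
}

_JSON_TYPES = {"string": str, "integer": int, "boolean": bool, "object": dict, "array": list}


def validate_args(schema: dict, args: dict) -> list[str]:
    """Check a small subset of JSON Schema and return error messages
    (an empty list means the arguments passed)."""
    errors = []
    props = schema.get("properties", {})
    for key in schema.get("required", []):
        if key not in args:
            errors.append(f"missing required field: {key}")
    for key, value in args.items():
        if key not in props:
            if schema.get("additionalProperties", True) is False:
                errors.append(f"unexpected field: {key}")
            continue
        expected = _JSON_TYPES[props[key]["type"]]
        # bool is a subclass of int in Python, so reject it for "integer".
        if not isinstance(value, expected) or (expected is int and isinstance(value, bool)):
            errors.append(f"{key}: expected {props[key]['type']}")
    return errors


schema = CREATE_TASK_TOOL["inputSchema"]
print(validate_args(schema, {"title": "Stretch", "priority": 2}))  # []
print(validate_args(schema, {"priority": "high", "due": "today"}))
```

Rejecting malformed calls before they touch the environment means every logged action is already known to be well-formed. That is the kind of structured thinking the schema forces.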

Looking Ahead: Phase 2 and Beyond

The hackathon proceeds in two phases. Phase 1 established the Green Agent as the environment manager and evaluator. Phase 2 will introduce a competing Purple Agent to execute finance-oriented workflows and transactional tasks.
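The Green/Purple split can be pictured as a small orchestration loop. The green agent holds each task's expected outcome, sends only the instruction to the purple agent, and scores whatever state comes back. In the real setup the purple agent would be a separate agent reached over A2A. Here a plain Python callable stands in for it, and `run_assessment`, the task fields, and the invoice examples are hypothetical.

```python
from typing import Callable


def run_assessment(tasks: list[dict], purple_agent: Callable[[dict], dict]) -> dict:
    """Green-agent loop. Ground truth ("expected") never leaves the green side,
    and tasks run in a fixed order (sorted by id) so repeated runs match."""
    results = []
    for task in sorted(tasks, key=lambda t: t["id"]):
        request = {"id": task["id"], "instruction": task["instruction"]}
        try:
            final_state = purple_agent(request)
        except Exception as exc:   # an agent crash counts as a failed task
            results.append({"id": task["id"], "passed": False, "error": str(exc)})
            continue
        passed = all(final_state.get(k) == v for k, v in task["expected"].items())
        results.append({"id": task["id"], "passed": passed})
    passed_count = sum(r["passed"] for r in results)
    return {"results": results, "pass_rate": passed_count / len(results) if results else 0.0}


tasks = [
    {"id": "pay-1", "instruction": "Pay invoice 12 for $30", "expected": {"invoice:12": "paid"}},
    {"id": "pay-2", "instruction": "Decline invoice 13", "expected": {"invoice:13": "declined"}},
]


def toy_purple(request: dict) -> dict:
    # Toy stand-in that only handles the first task correctly.
    return {"invoice:12": "paid"} if request["id"] == "pay-1" else {}


print(run_assessment(tasks, toy_purple)["pass_rate"])   # 0.5
```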

If time permits and I get everything working, the ideal would be a proper benchmark testing cross-platform compatibility and A2A protocol interoperability across Meta Quest devices for a similar task creation app. Even though Meta has tanked its enterprise business, the Meta AI glasses are ones to watch. I’ve repeatedly asked the Llama team about releasing an open source SDK so third-party developers can do more meaningful AI application development on the device, which has yet to come. My questions were simple ones, like combining data from an AR golf app with another app so a user could see a basic data visualization dashboard or chart.

When I ran into Llama’s product director, Joe Spisak, at PyTorch Conference last year (while he was in deep conversation with PyTorch co-founder Soumith Chintala), he thought it could maybe be feasible and said he might reconnect with me in the future to talk about it more. Still, it seemed like something farther down the pipeline for Meta. I also spoke with some members of the ExecuTorch team about going low-level to get the raw data I’d need for the TaskSchema, the overall layer for all my data input and capture: speech-to-text/voice, plus computer vision using Meta's equivalent of Apple's VisionKit.

Unlike Meta, I think developing for Apple might be easier since it's more of a vertical stack. Even though many people don't consider Apple friendly to open source, it was much easier to plan ahead for what I'd like to integrate eventually: data visualization, dashboards, combining all types of input (using App Intents), and other Apple frameworks. It's also relatively simple to pre-populate data through the HealthKit API from what you already have there, for anything beyond your task logs (which is what I built as a native app for this initial hackathon). Ideally, you could visualize fitness dashboards combined with other types of data, something I think could be useful to consider for consumer apps like finance.

Unfortunately, I tabled this. I really wanted to work on multi-agent systems, but I wanted to make sure I wasn't overextending myself. I wanted to add a finance agent as the second major agent beyond productivity (which is what I built the initial app for). It would use the Ampersend SDK from Edge & Node, or else Fetch.ai, since I had been evaluating which web3/blockchain/crypto payment frameworks people use for decentralized payment systems like Skyfire. The intention would be to show how an agent can take care of a simple payment task. Skyfire demonstrated this at their recent event at Google, which used A2A and AP2. On my end, I considered using the Apple Pay API to facilitate this, or Ampersend natively if possible.

For now, though, it was too much to build for this hackathon, even with an extended timeline. The benchmark I would still ideally want amounts to two whole other projects. The first is building a proper finance agent. The second is the data visualization dashboard I've been dreaming of building for the larger productivity app for ages, which would include the speech-to-text and computer vision capture features (of data and tasks a human would have) that I described earlier. These are nice-to-haves (non-essentials), given that I was still learning about the A2A and AP2 protocols, how they'd integrate with MCP, and everything else in this course. Getting all of this to work in production would take much more time, especially with the real users I'm already working with on TimeCake, the productivity app for the Create Your Reality brand. I originally conceptualized TimeCake at a different hackathon for Apple Vision Pro. I've since scrapped that version and want to refactor it entirely, because Apple brought 3D charts to Swift Charts last year (though, oddly, there is still no 3D pie chart).

Beyond the hackathon, CYRA represents a foundation for evaluating agentic performance in embodied, multimodal environments, a critical gap as spatial computing becomes more prevalent. The long-term vision is a multimodal CUA (Computer Use Agent) benchmark for AR/VR/MR/XR spatial computing environments, enabling privacy-safe evaluations of agents that interact with immersive interfaces.

Connecting Theory to Practice

What makes this project meaningful is how directly it applies the course's core principles: reproducible evaluations, deterministic scoring, multi-agent systems design, and rigorous benchmarking methodology. Professor Song and the guest lecturers provided a framework for thinking about agent evaluation that goes far beyond implementation details—it's about building systems that can be trusted, verified, and built upon by others.

For me, just building the app was a lot of work, given that I experienced several deaths in the family over the three months I was building it. Regardless, it was rewarding to work on a small project that challenged me to think through the complexity of combining multiple frameworks. It required careful information architecture around MCP, and UX/UI decisions that stayed feasible within my time and capacity.

// This is still rough, folks, so I'll keep updating this repo after Jan 31. I'm still building the MCP pieces right now and trying to meet the Jan 31 deadline for the Agent Registry dashboard (to make sure this is reproducible). I'm also still aiming to develop Part 2 of the hackathon for the Purple Agent, AND the whole other TimeCake app with speech-to-text and computer vision capture features (for tasks captured by a human and by an agent) for the productivity app as a whole. So brace yourself if this isn't all complete: multiple parts of this project are at different stages of progress that I haven't shown here yet.

#AI #ArtificialIntelligence #AgenticAI #AgentBeats #UCBerkeley #ReinforcementLearning #GameAI #SpatialComputing #VisionOS #MCP #AgentEvaluation #MachineLearning #ARVRDevelopment
