In this issue I talk about:

- DeepSeek

- AI Perspectives from e/acc, d/acc, doomerism and n00b

- AI x XR Workflows - my other favorite subject

AI Philosophy Overview

I promised that in this issue I would feature more details on DeepSeek: what the R1 paper means for consumers and software engineers, both technically and for the general market; what can be interpreted as “racist” and what is “pro-America” at a time when anti-TikTok sentiment is also prominent within the U.S.; and what this all means for you.

Below I quickly talk through various philosophies on AI. I’ll focus mainly on the first three and mostly leave out the rest, since they are less practical and concrete to discuss, with the exception of #5, which covers a good chunk of people.

Terms

- AI Opportunity a.k.a. (e/acc) (AI Optimists) - Folks who want to accelerate the future with AI everywhere. Oftentimes, these are the folks advocating for AI to be deregulated everywhere.

- Decentralized AI a.k.a. (d/acc) - AI pragmatists who want to accelerate the future, but with decentralization. Many of these folks come from the crypto/blockchain/web3 community focused on distributed computing.

- Doomers - AI skeptics/pessimists who like AI but have a good amount of fear around safety and advocate heavily for AI regulation. Many AI Optimists label these folks as unnecessarily worried that AI will kill humanity, or as overly fearful about the risks of AI and AGI (Artificial General Intelligence, or General AI).

- Unrealistic AI haters - A lot of anti-tech folks who think that because tech is capitalistic and it sucks, we should all be monks who live on a farm and stop extracting metal from the earth.

- AI consumers who are non-technical non-creators (people who don’t make the tech, beyond understanding how to make genAI images with DALL-E or Midjourney) - Folks in the middle who like AI but have no idea what is going on under the hood. They probably hear jargon, get scared, and everything goes over their heads, so they believe movies on Netflix like Subservience (Megan Fox, who also happens to be part Filipina American, btw) and AfrAId (John Cho) are the new Terminator: real and coming in thousands of days, because Sam Altman said that AGI will be realized.

- AI n00bs - Everyone else in between that has no idea what is going on and probably only learned how to use ChatGPT yesterday.

Personally, I feel that e/acc and d/acc are more like siblings than enemies, and that d/acc and doomerism are homies on the “AI Safety front.” At the same time, I do not feel we are at the point of AGI (even by thousands of days). The human brain is so complex that we still cannot fully understand neurodegenerative brain disease or how memory is stored in the human body; in vivo is vastly different from in silico.

Outside of the laughable bid by Elon Musk to buy OpenAI for $97B, Andrej Karpathy (former head of AI at Tesla, whom I met when he had just been hired into that company and am a huge fan of, also known for a long list of accolades, including being on the OpenAI founding team) has tweeted recently about the performance of xAI’s Grok, which is attempting to dethrone OpenAI and DeepSeek as the perceived leaders in AI in the world.

The DeepSeek Paper

the tl;dr

What this means for tech founders, investors, and software engineers:

- Did DeepSeek successfully utilize Parallel Thread Execution (PTX) to build a moat that will overtake Nvidia’s moat (CUDA)?

- What will replace transformer model architecture?

- How many GPUs do you actually need to perform benchmarks (tasks that are considered State of the Art) to meet consumer demand?

- Is China beating the United States in the AI arms race?

tl;dr: DeepSeek (a Chinese AI company funded by its parent hedge fund, High-Flyer) claims to have a more performant model than OpenAI’s “latest model” at a significantly lower spend, making it better, faster, and cheaper than the latest GPT (Generative Pre-trained Transformer), the OpenAI model that consumers know as what powers ChatGPT. Many people are totally freaking out, worried that AI companies in the United States are not doing as well as we thought and that this dethrones the United States as the #1 place in the world for AI.

My personal take: Everyone has been overreacting. OpenAI likely still has competitive models internally, and the benchmarks people are comparing publicly are on models that, to me, aren’t considered “the latest.” Models get released what feels like every week now, so I was not too concerned about it, although the speculation on Nvidia stock piqued my interest at first. Additionally, the lecture by Russ Salakhutdinov (Meta’s VP of Research) at UC Berkeley’s LLM Agents (Advanced) course on reasoning noted that the same methods they know OpenAI is using, from repeat sampling to other methods, are also used by DeepSeek. Everyone’s on the same tip here, so you will be at the edge of your seat every week watching which AI company will one-up the others next with a latest model, feature, or proclaimed benchmark that blows everyone else out of the water. It’s an exciting time to watch.

What this means for the average consumer: AI will get cheaper and more investment will come in from private capital and government in an arms race to keep the United States #1 in the world and on top.

What else is missing from this conversation - an Asian and Asian American perspective

I recommend listening to the recent episode of the All-In Podcast, and to David Sacks in particular. He is known as the AI Czar in this administration, formally sits on the President’s Council of Advisors on Science and Technology, and his venture fund, Craft Ventures, invested in xAI, another AI company founded by Elon Musk.

Was DeepSeek’s ability to bypass Nvidia’s CUDA (where Nvidia’s moat is as a business) for real?

He discusses all the other details about how many GPUs (Graphics Processing Units), specifically Nvidia H100s, were actually used to train the models to meet these benchmarks, and how realistic it is that China optimized everything given its lack of access to this hardware, buying it through Singapore. Sacks says it is “hard to validate how much compute was actually spent on DeepSeek 6M for final training run....Final training cost was more tens of millions of dollars, 9-10 months ago” and that “it’s not 6M vs a billion (dollars).”

While I know many of my friends dislike the Trump Administration, it is very clear that a significant amount of investment into AI, along with changes to make cryptocurrency policy much clearer, was realized once Trump took office. The lack of clarity before that was something that even I and other tech Democrats had been frustrated about.

As a side note, I do personally believe that there needs to be some sort of regulation and guardrails with AI so it doesn’t go completely berserk. However, it is important to pay very close attention to how, specifically, it is being regulated.

I highly recommend reading The Batch, the newsletter from Andrew Ng (Stanford Professor, Co-Founder of Coursera, ex-Google and ex-Baidu), to get a very nuanced position on AI policy. He probably has one of the more balanced positions in Silicon Valley: not the most extreme version of effective accelerationism (“e/acc”), nor decentralized accelerationism (“d/acc,” what crypto maxis like to use to accelerate decentralization as a whole) taken to the maximum, nor doomerism, as Marc Andreessen has called it in the past. Instead, he points specifically to which parts of legislation, even the California state-based legislation about AI last year, make sense and which ones don’t, for all the extremely detailed technical reasons you can find in this post.

You may have seen some anti-China and pro-America remarks that some people have labeled “racist,” saying that we should only be supporting AI companies founded in the United States by “American” founders. This is similar to the sentiment against TikTok, even though some of the same underlying criticism surrounding “surveillance capitalism” applies to any social media application founded and based in the United States.

Personally, I have noticed some East Asian American and South Asian American friends say we are all underestimating China, which tends to copy every technical innovation it finds very fast, very cheaply, and quite well. Much of the free/open source software (F/OSS) community is thrilled about DeepSeek because the cost to do their work will be much cheaper.

The Technical Jargon Skinny - For Software Engineers, Data Scientists, and Tech Investors

It’s important to note that both Sam Altman, co-founder and CEO of OpenAI (whom I had the honor of meeting years ago when he was first starting off as head of Y Combinator, known as YC, the world’s premier tech startup accelerator program), and Mark Chen (Chief Research Scientist of OpenAI) shared initial positive sentiments in their tweets in response to the DeepSeek craze.

How DeepSeek Claims To Be More Performant

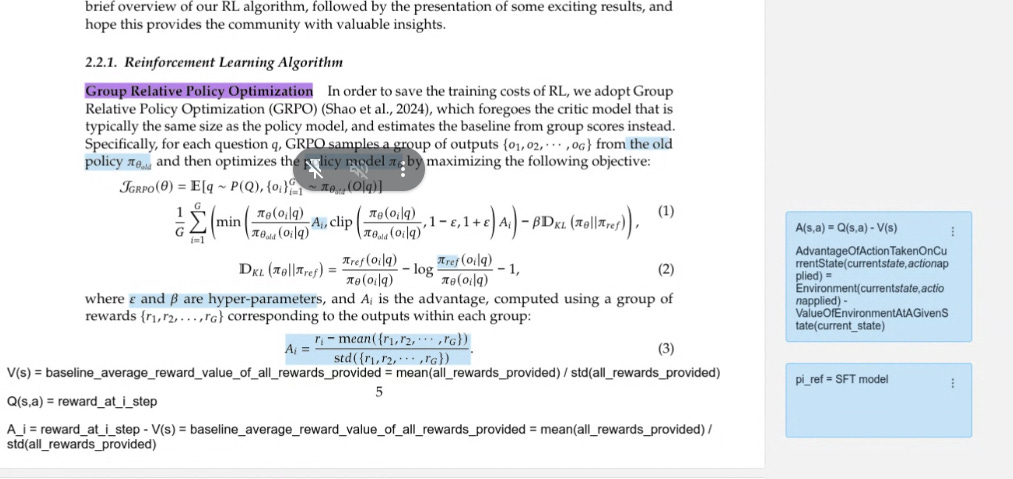

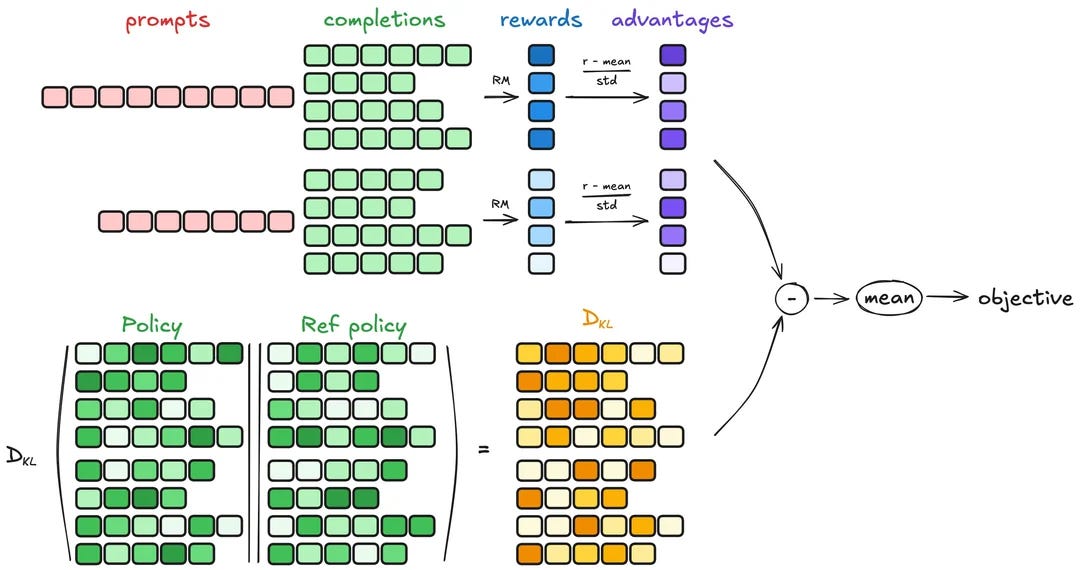

Is DeepSeek that much more efficient than OpenAI’s o1 model through its optimization technique, GRPO (instead of Reinforcement Learning from Human Feedback, or RLHF)?

Group Relative Policy Optimization (GRPO) is DeepSeek’s method, used as a replacement for PPO (Proximal Policy Optimization).

PPO has been the dominant method in RL (Reinforcement Learning) for quite some time. For those that don’t know, RL is the basis of game AI, a rewards-based system that lets an AI bot beat a professional chess or poker player. What DeepSeek claims is that its method, GRPO, is more efficient because it does not need the separate value (critic) model that PPO trains alongside the policy; instead, it scores each sampled answer relative to the other answers in its group. There has been much debate about whether DPO (Direct Preference Optimization) is more or less performant than PPO, and GRPO is now the thing that everyone is paying attention to, in addition to PTX, which some say would kill Nvidia’s moat via CUDA.
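For the engineers reading, here is a minimal sketch of the “group relative” part of GRPO as I understand it from DeepSeek’s papers: sample several answers to the same prompt, score each one, then normalize each score against the mean and standard deviation of its own group. The function name, the example rewards, the `eps` term, and the choice of population standard deviation are my own assumptions for illustration, and this leaves out the rest of the training objective (the clipped policy ratio and KL penalty). It is not DeepSeek’s actual code.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Score each sampled answer relative to its own group.

    rewards: one scalar reward per answer sampled for the SAME prompt.
    eps: small constant (an assumption here) to avoid dividing by zero
         when every answer in the group gets the same reward.
    """
    mu = mean(rewards)       # the group's average reward acts as the baseline
    sigma = pstdev(rewards)  # population std; some implementations use sample std
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical example: four answers to one math prompt,
# rewarded 1.0 if the final answer was correct and 0.0 otherwise.
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
print(advantages)
```

Answers that beat their group’s average get a positive advantage and the rest get a negative one, which is why no separately trained value model is needed to supply a baseline.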

The DeepSeek R1 GRPO architecture as seen on Reddit

Was DeepSeek really more performant than OpenAI’s models through PTX, though?

What about xAI’s Grok?

Others have shared evaluations too, including one of the folks I totally fangirled over upon meeting him in 2018, Andrej Karpathy (former head of AI at Tesla, Stanford PhD, and OpenAI founding team), who cited his results from using a beta version of Grok. My brother texted me the other day raving about Grok’s ability to make games very quickly. However, this is already common and has been around for a while. And now we also have Manus. Too many Chinese companies to talk about now!

Long before Grok, using a combination of Claude and Cursor, my friend Meng To showed his son how to create 3D games using React Three Fiber.

Impressively, Meng has also created a web-based video editor with it. I recommend checking out his course at designcode.io, which offers some of the most easy-to-understand, designer-first tutorials on AI. It shows how to use Anthropic’s Claude with Cursor and does a good comparison with other tools for creating new web and native iOS mobile experiences.

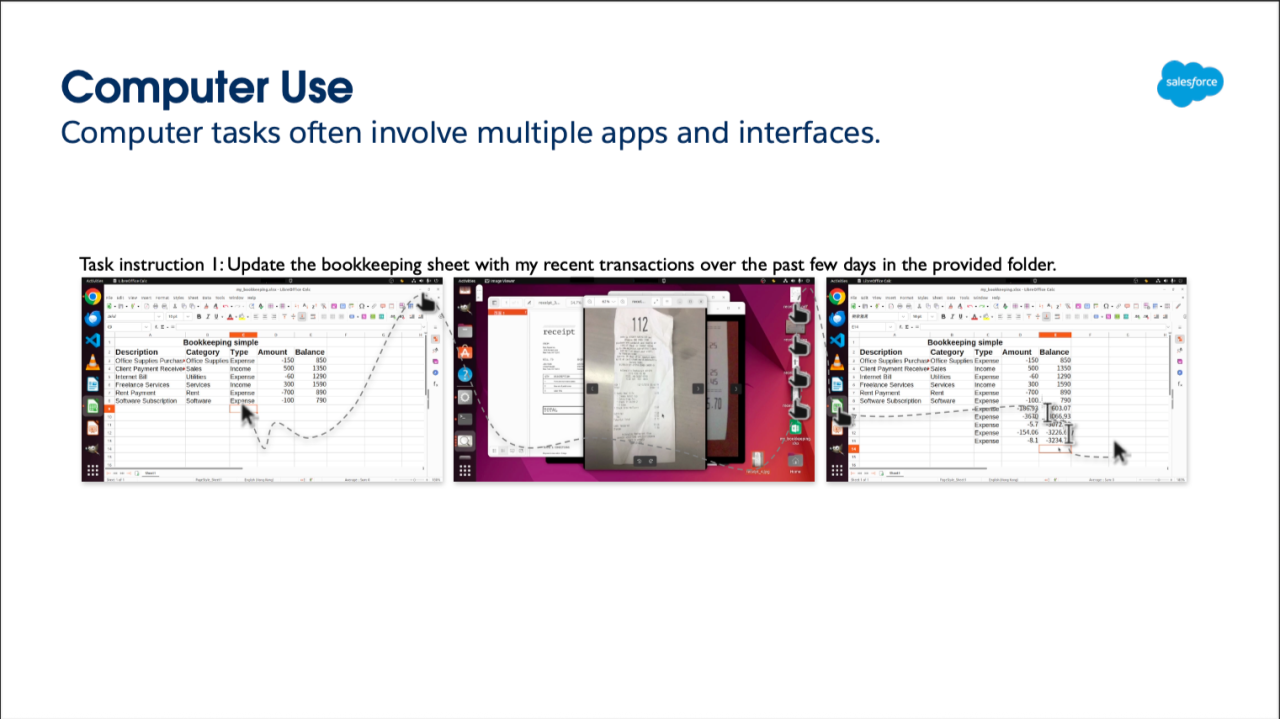

Multimodal Models: AI x XR Workflows for WebXR, Apple Vision Pro

Now, a lot of you follow me because of my work in Augmented Reality (AR), Virtual Reality (VR), Mixed Reality (MR), and eXtended Reality (XR)/spatial computing, having published an entire book on the subject years ago. Over the years I’ve delved back into the web3 rabbit hole and deeper than ever into AI, and spent time rediscovering my love for content creation. I’m back in full force and plan on releasing a beta soon (beyond TestFlight, I hope) for a productivity x AI app I’ve been working on for Apple Vision Pro, with AI tied to physical real-world objects (a paper planner is in the mix). It is part of the Creating Your Reality brand, tying together self-development, productivity, and tech. Below I show how all this AI works in tandem and enables the future of XR, starting with basic tooling that utilizes generative AI, like 3D asset generation.

When speaking last summer with Andrew Ng’s deeplearning.ai staff, I thought it might be too early, since no one had quite cracked the code yet on utilizing AI to build out AR VR MR XR/spatial computing experiences. The most it could do was create a 3D asset (which Meta/FAIR/MAIR has done more correctly, as you can see from their VFusion3D paper, demonstrated with the video of the text-to-2D-to-3D Pikachu asset below).

Essentially, we are redefining Human Computer Interaction (HCI), the way people interact with computers, by leveraging the latest AI foundation models to make that interaction more human and more accessible, so that actual humans can better create, play, and connect.

Here you type a prompt, it creates a 2D image, and that image can become a glTF or other 3D file. This has been a common trend in the last year or two.

Last year, I thought the most I could contribute to F/OSS (Free and Open Source Software) projects, though it would still be very manual for me to program and customize, was a 3D visualization for the vector database Chroma that could work in Virtual Reality (VR), or a similar library for Arize Phoenix (which has some of the prettier data visualizations out there in the machine learning engineering/data scientist community).

At the same time, my friend Jason Marsh, who has been at it for years at his data visualization company, Flow Immersive, also created workflows that would work on Apple Vision Pro before its big release. He used the ChatGPT API and Whisper to let users create more voice-based interactions for their data visualizations, which initially worked on Meta Quest 2 and 3 and would also work on Apple Vision Pro.

Runway Labs came out with their Apple Vision Pro workflow, going from video capture to style-transferred video. Here you can see their CTO, Anastasis Germanidis, speak about it at the Ray Summit keynote last year.

Then in the last few months, Fei-Fei Li (Stanford Professor, former Chief Scientist of Google Cloud, Mother of AI, and also known for her seminal work on ImageNet) came out with her World Labs foundation model demos focused on 3D environments, with some cool videos you can watch here.

But in recent days, we can see XR being made much more easily. Below, you can see how I used Anthropic’s Claude to create a Calabi-Yau manifold in VR (one of my obsessions is making programmatic art in Virtual Reality, and things inspired by string theory and quantum physics, for fun), and it came up with this.

This was by far one of the more sophisticated responses I got among all foundation models, and I wasn’t even using Anthropic’s Claude Artifacts or OpenAI’s ChatGPT Canvas. It came by way of typing into the free (not paid) version of Claude. You could directly interact with your creation and view the code in the consumer app, so bravo to Anthropic for making this accessible to the average person. Vibe coding is something we can all do now to some degree.

And then for those that don’t want headsets, but want products created by other AR VR MR XR/spatial computing developers, my fellow Oculus Launchpad alumnus, Charles Niu, created this really cool browser demo that has an AI agent adapting to your browsing behavior to make your experience much more fun.

See the demo video on YouTube.

These are all really exciting things happening at the intersection of these two technologies. I can’t wait to see more, and for Meta and Google to release APIs and SDKs for their AI/AR glasses so we can do much more with NLP and computer vision (and RL) in games. I also look forward to the long-awaited Apple release of AR/AI glasses. For years (since like 2015/2016), I waited every year for Apple to release a headset, and now that we have Apple Vision Pro (which I still believe is crisp, but highly expensive and more of a developer kit than a consumer-ready device), I’m hoping that they’ll have something to compete with Meta. And if all this fails, maybe we will have contacts or public holographic displays for those who care a lot about privacy and don’t want anything on their face.

Either way, the future of front-end interaction and design, to me, is still direct interaction with and manipulation of assets, to help enhance our senses more holistically. The current moment and future of back-end engineering/programming is still automation, utilizing AI as a way to aid/augment (not completely replace) humans.

Me and my friend, VR and game dev OG Nicole Lazzaro, at the VisionDevCamp hackathon last year.

Me moderating a panel in Oakland for First Fridays (the inaugural one) for Kapor Capital on Mixed Reality, Art, and Blockchain way back when!

Hire me to speak

About Erin

Erin Jerri Malonzo Pañgilinan is a software engineer and computational designer. She is an internationally acclaimed author of the O’Reilly Media book Creating Augmented and Virtual Realities: Theory and Practice for Next-Generation Spatial Computing, which was named BookAuthority’s #2 must-read book on Virtual Reality in 2019, has been translated into Chinese and Korean, and is distributed in over two dozen countries.

She was also previously a fellow in the University of San Francisco (USF) Data Institute’s Deep Learning Program (2017-2018) and Data Ethics Inaugural Class (2020) through fast.ai.

She is currently working on her next books, applications, and films.

Erin earned her BA from the University of California, Berkeley. She is a proud Silicon Valley native.