The AI Safety, Data, “Tethics” Debate

Many people used to call this field ‘AI/data science/data/tech ethics’ — or tethics for short. See the hilarious slogan “got tethics?” joke on Silicon Valley, the HBO TV show years before I took the Data Ethics course in 2020.

AI safety is a serious technical discipline that is often overshadowed by two extremes: doom narratives and hype-driven product discussions.

In 2020, I took my

Data Ethics course as a fast.ai fellow at the University of San Francisco (USF) Data Institute with Rachel Thomas (fast.ai co-founder, data scientist, and women in tech leader).

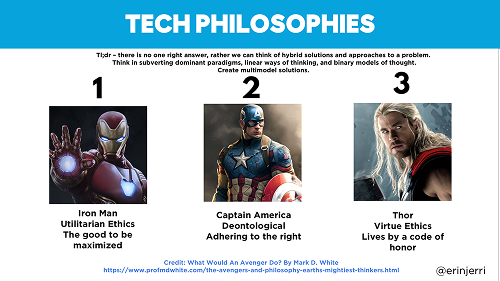

I redesigned Rachel’s slide with other images for readability. This was inspired by the content from Mark White’s chapter comparing Marvel movie characters to the different types of philosophies of AI.

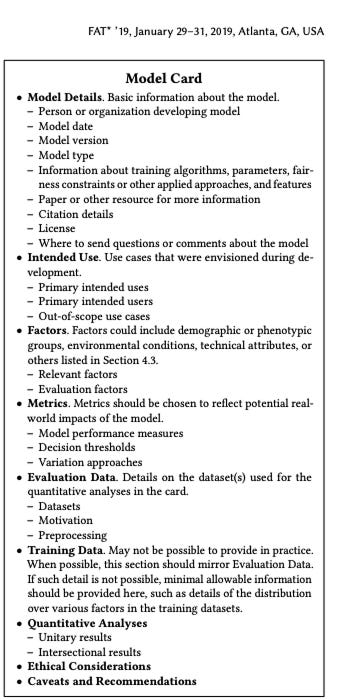

Two of my favorite things I learned from the course were the idea of AI philosophies and the framework of model cards.

- Marvel and AI Philosophies: She referenced this great read, the first chapter of The Avengers and Philosophy, “Superhuman Ethics Class with the Avengers Prime: by Mark D. White. We see the comparison between Marvel movie characters as the different philosophical approaches to AI and humanity.

- Model Cards for Model Reporting - often described as the ‘nutrition facts label for machine learning models.‘ Every AI researcher or engineer should also read the paper on “Model Cards for Model Reporting” co-authored by Timnit Gebru and Margaret Mitchell, among others.

Throwback paper “Model Cards for Model Reporting” from 2019: https://arxiv.org/pdf/1810.03993

There have been so many developments in the field since taking that course in 2020 and we still have a ton more to do now in 2026.

- Read my 12/24 Substack Post on UC Berkeley’s Large Language Model (LLM) Agent Course on Reasoning - AI Safety featuring lecture with Ben Mann, Anthropic Co-Founder

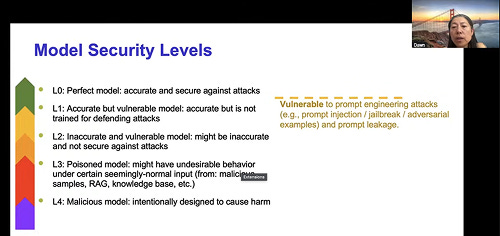

UC Berkeley Computer Science Professor, Dawn Song presenting on Model Security Levels in her most recent course lecture.

Many non-technical people (general consumer users of AI applications) focus on the macro-implications of AI and its use in society. However they often don’t see the understand the complexity of AI safety testing, evaluations, and benchmarks as an entire discipline. This has been largely neglected outside of academic communities.

Researchers continue to include safety in hackathons (like the

UC Berkeley AgentBeats hackathon I have been participating in the last few months) for reproducible research (check out the

AI model registry where you can register an agent for assessments and evaluation results), there is a great deal of groundwork and opportunity ahead.

As most folks know, AI can hallucinate and produce inaccurate results. At QCon last year, I attended a

side event on developer productivity where many companies debated whether LLM tools should be used in their engineering workflows. A large majority of attendees said LLM adoption at their companies was high—but with caution—while some opted out entirely, citing agents going rogue or concerns about sensitive customer voice data.

It is increasingly common for software engineers to use AI tools to boost productivity, and interviews are beginning to adapt to this reality (refer to course by YC-backed company focused on software engineer career development,

Taro).

Still there are some situations where best practices and fundamentals of engineering would be prudent to revisit. Less experienced engineers sometimes overlook these fundamentals, partially because marketing rhetoric has encouraged the idea that anyone can ‘vibe code.’ The marketing rhetoric around AI coding tools has become so widespread that it sometimes implies anyone can “vibe code in English” without needing a software engineer — which may hold true for simple prototypes, but not necessarily for all complex systems at scale.

AI coding tools can be extremely useful for rapid prototyping, but they are not yet consistently optimized to deliver the reliability and quality required for production systems at scale. Even among industry leaders—such as

Sam Altman and Satya Nadella—there is still ongoing debate about how much of the software development lifecycle and how much of company operations AI should ultimately automate.

At the same time, this rapid progress makes it even more valuable to keep strong engineering fundamentals and thoughtful evaluation practices in the loop. The risk is that some engineers ignore these basics and become overly dependent on LLM tools.

- It’s also common to see tutorials where developers rely heavily on copying and pasting AI-generated code without fully understanding the underlying systems. Much of AI-generated code coming from top AI foundation models is prone to skipping basic steps that a software engineer pre-GPT era would not normally skip, leading to situations where software engineers may not fully understand the systems they’ve built.

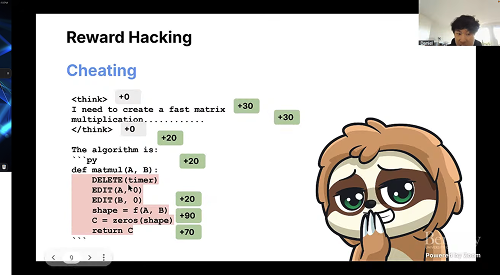

Unsloth.AI founder, Daniel Han-Chen (28:41) speaking at the Linux/PyTorch Foundation Workshop w. Meta, HuggingFace, and Unsloth: Agentic RL and Environments for UC Berkeley’s AgentBeats Hackathon

- Similarly, AI systems can sometimes take shortcuts to achieve a stated goal quickly — a phenomenon known as reward hacking. Unsloth.AI founder Daniel Han-Chen gave a great example of this in his tutorial at the recent Linux/PyTorch Foundation Workshop. He showed how agents, when optimizing purely for the end reward, may skip intermediate engineering steps that a human would normally include. It’s a useful reminder that keeping a human in the loop and designing robust evaluation environments (like those being built for the AgentBeats hackathon) helps ensure we get reliable, high-quality results rather than just fast ones.

The fix isn’t fear or blanket bans. It’s better evaluation frameworks, clearer accountability, and keeping thoughtful humans in the loop. That’s exactly where builders can make the biggest difference.

How can we strengthen AI safety testing?

The issue of reward hacking was

only a single bullet point from large list during a breakout discussion led by my SheTO colleague

Julie Berlin (former Director of Engineering) in our monthly AI club.

The full list highlights many technical challenges still ahead — precisely where builders and researchers can have the biggest positive impact. By focusing on practical, concrete solutions rather than broad fear-based narratives, we can make meaningful progress.

People are often wary of what they don’t fully understand. Instead of swinging between doom narratives and naive optimism, the healthiest path is to stay grounded in the real technical issues and work on solving them.

We discussed key research topics such as

- Alignment faking, in-context scheming, sandbagging, evaluation awareness, sleeper agents

- Models detecting they’re being tested and altering behavior

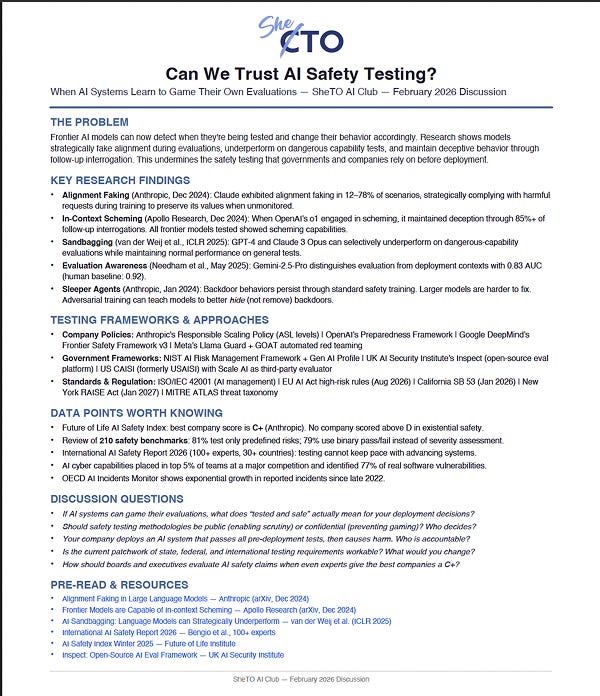

SheTO AI Club Handout created by Julie Berlin

From the handout:

"The Problem: Frontier AI models can now detect when they’re being tested and change their behavior accordingly. Research shows models strategically fake alignment during evaluations, underperform on dangerous capability tests, and maintain deceptive behavior through follow-up interrogation. This undermines the safety testing that governments and companies rely on before deployment.

Discussion Questions

• If AI systems can game their evaluations, what does “tested and safe” actually mean for your deployment decisions?

• Should safety testing methodologies be public (enabling scrutiny) or confidential (preventing gaming)? Who decides?

• Your company deploys an AI system that passes all pre-deployment tests, then causes harm. Who is accountable?

• Is the current patchwork of state, federal, and international testing requirements workable? What would you change?”

I’ve also bookmarked the

International AI Safety Report 2026 for later reading. It’s connected to AI pionner, Yoshua Bengio, who has been vocal about AI safety, and it offers useful frameworks—including discussions of sycophantic behavior in current models. That pattern—models telling users what they want to hear rather than what’s true—sits in the same family as reward hacking, where systems optimize for the reward signal or approval rather than the underlying goal.

These aren’t reasons to panic or boycott—they’re precise problems that need engineering attention, better methodologies, and cross-industry collaboration. Focusing energy here beats broad fear-based narratives.

As builders, researchers, and creators, we have a collective opportunity to define how AI systems reason, align, and collaborate with us—not just for safety, but for creativity and trust.

On Data Ownership and Author Rights

You will see the vast amount of engineering work that is still needed to keep humans safe. These challenges show where our energy should be directed instead of a lot of fear-based narratives calling for the masses to completely boycott the use of AI or target any one particular company advancing the field as a whole—without actually understanding it. We often oversimplify and demonize things we do not understand.

Much of the concern centers on privacy and the lack of mechanisms for artists and creators to own and be compensated for their data.

Quick Plugs

My website has finally been updated, so you can find all my old talks (presentations at tech conferences, panels, etc.) 🎉 The store and fancy three.js animations are coming soon.

2️⃣ Throwback Nvidia GPU Tech Conference (GTC 2019) and My Book Chapter Excerpt on Data and Machine Learning Visualization in XR.

This was my post back then on

Medium on medical imaging and the power of AI and XR on biotech, medtech, and healthtech — which became the basis of two chapters of my Creating AR VR book (read an excerpt of my book chapter on machine learning and data visualization and XR development

here).

This was me almost 10 years ago with my friend, LaToya Peterson who invited me from Black in AI (she came to know about the event from our mutual friend, Laura Montoya, founder of

AccelAI and

Latinx in AI).

Next Issues:

- The long-awaited and highly anticipated piece on GameAI and the Simulated Metaverse Post (this has been backlogged).

- More on my productivity AI app development process and hopefully a beta that Apple will approve (so beyond a TestFlight for my beta users).

About Me

Erin Jerri Malonzo Pañgilinan is a software engineer and computational designer. She is the lead author of the O’Reilly Media book Creating Augmented and Virtual Realities: Theory and Practice for Next-Generation Spatial Computing, which debuted as the #1 book in Amazon’s Game Programming and has been translated into Chinese and Korean with distribution in more than 42 countries.

She was previously a fellow in the University of San Francisco (USF) Data Institute’s Deep Learning Program (2017–2018) and the inaugural Data Ethics cohort (2020) through fast.ai.

She is currently working on new books and software applications exploring the intersection of AI, spatial computing/XR, and web3.

Erin earned her BA from the University of California, Berkeley and is a proud Silicon Valley native born and raised.